For me, the creepiness factor of Apple’s “Vision Pro” is off the charts. It’s not just the look, but all the personal biometric data it collects and processes, ostensibly in the name of maintaining your personal security.

While it’s still a far cry from the device described in the final paragraphs below, I can easily see it ending up there at some point in the not-too-distant future.

A post I made in 2017 (skip to the “Brain Waves” section toward the end if you want to skip all the stereo geek stuff):

The Future of High Fidelity

I was cleaning stuff out over the weekend and ran across a file folder full of clippings I’d kept from various sources over the years. I was a big hi-fi geek in high school and college, and one of the articles I kept that I’d always loved was a bit of fiction from the mind of Larry Klein, published July 1977 in the magazine Stereo Review, describing the history of audio reproduction as told from a future perspective. Since the piece was written many years in advance of the personal computer revolution, the author was wildly off-base with some of his ideas, but others have manifested so close in concept—if not exact form—that I can’t help but wonder if many young engineers of the day took them to heart in order to bring them to fruition.

And I would be very surprised indeed if one or more of the writers of Brainstorm had not read the section on neural implants, if only in passing…

Two Hundred Years of Recording

The fact that this year, 2077, is the Bicentennial of sound recording has gone virtually unnoticed. The reason is clear: electronic recording in all its manifestations so pervades our everyday lives that it is difficult to see it as a separate art or science, or even in any kind of historical perspective. There is, nevertheless, an unbroken evolutionary chain linking today’s “encee” experience and Edison’s successful first attempt to emboss a nursery rhyme on a tinfoil-coated cylinder.

Elsewhere in this Transfax printout you will find an article from our archives dealing with the first one hundred years of recording. Although today’s record/reproduce technology has literally nothing in common with those first primitive, mechanical attempts to preserve a sonic experience, it is instructive from a historical and philosophical perspective to examine the development of what was to become known as “high fidelity.”

Primitive Audio

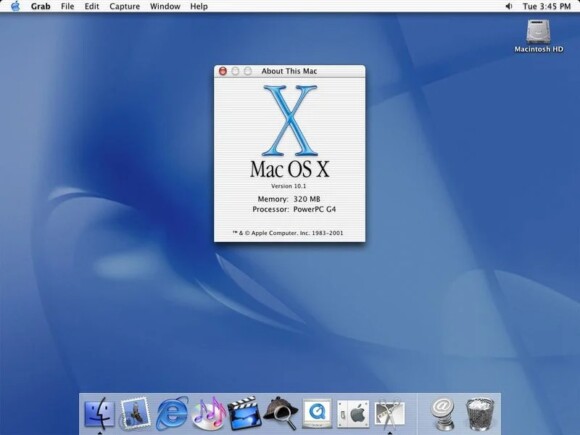

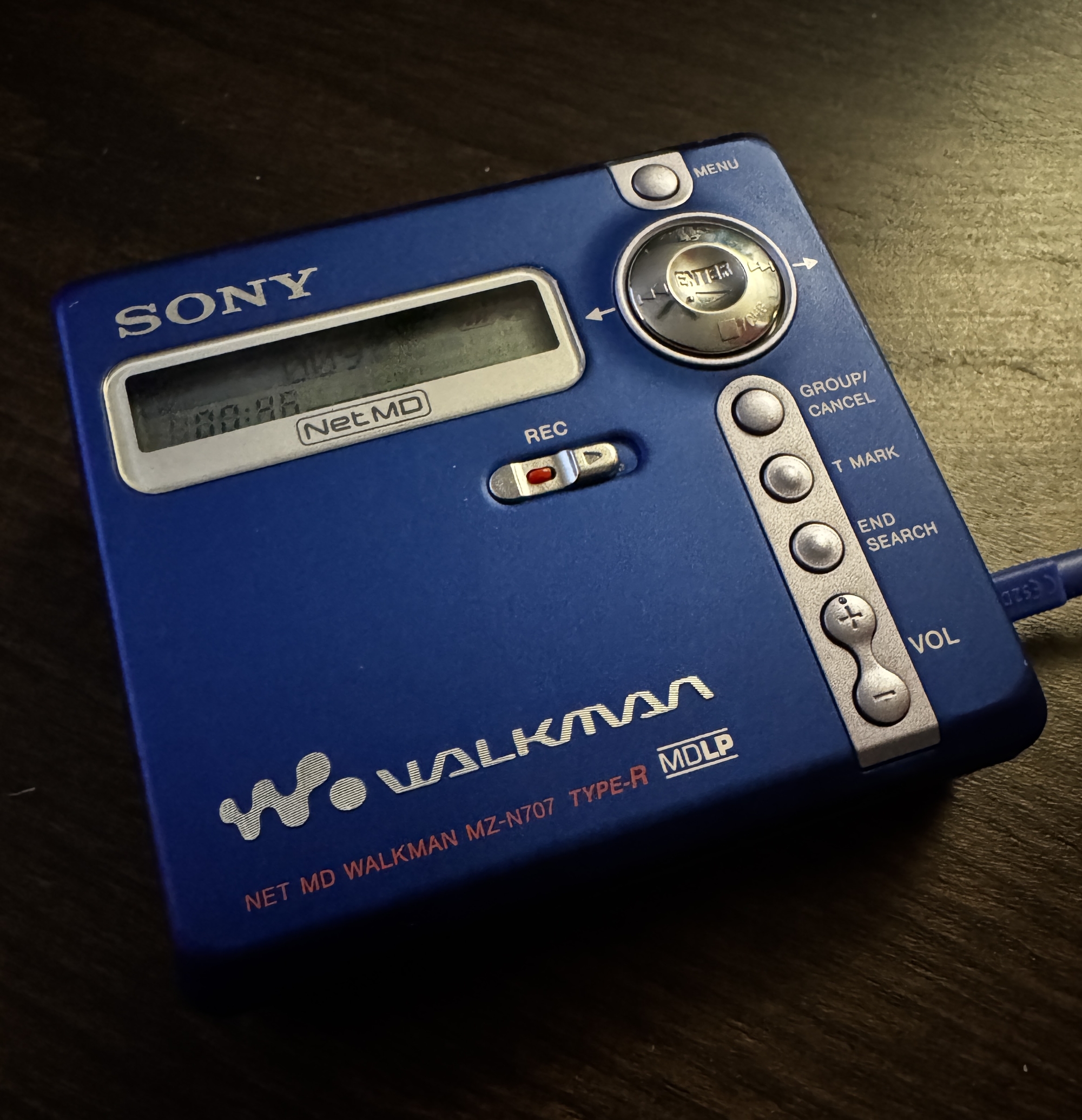

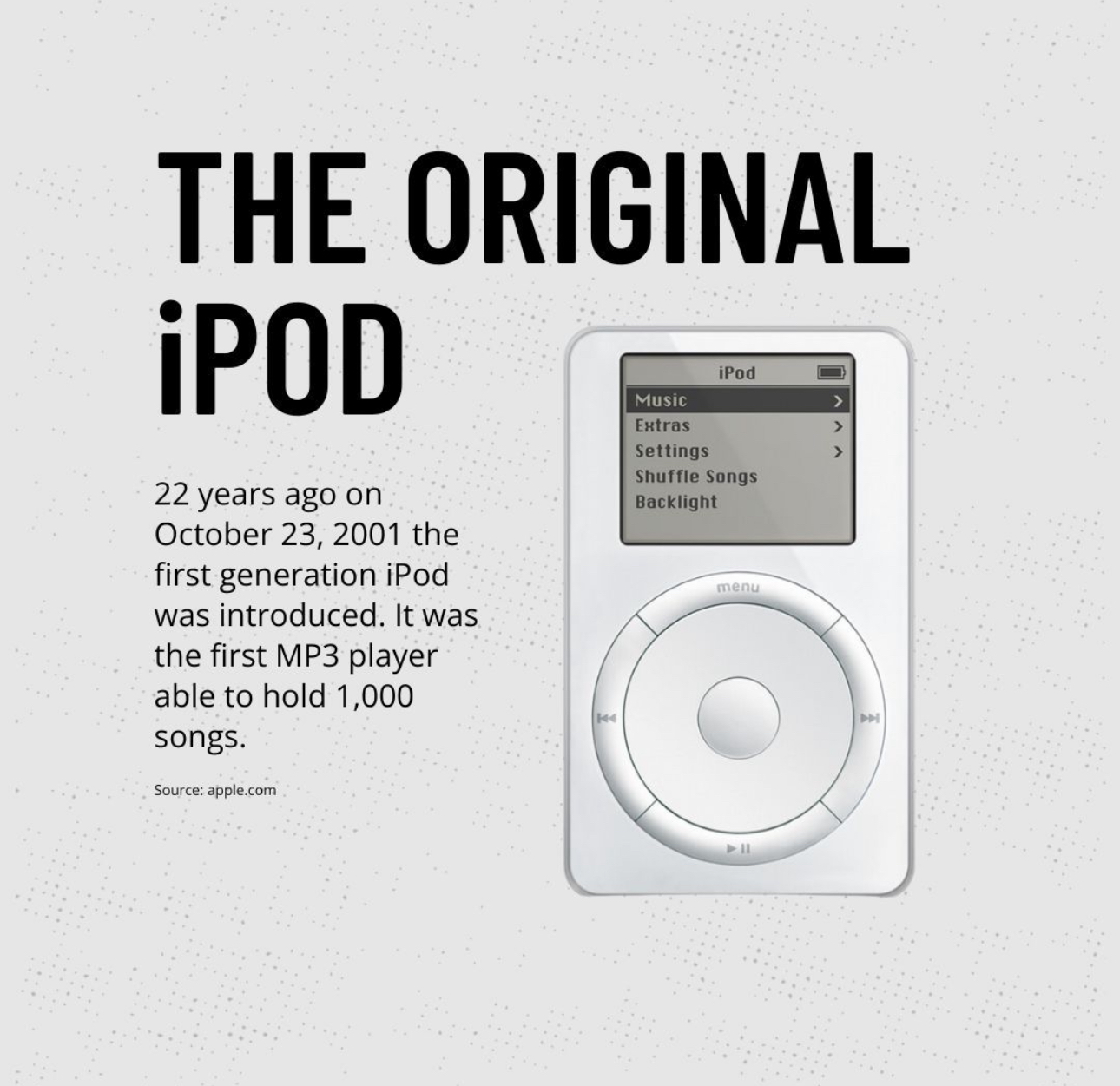

It is clear from the writings of the time that the period just after the year 1950 was the turning point for sound reproduction. For a variety of sociological, economic, and technological reasons, the pursuit of accurate sound reproduction suddenly evolved from the passionate pastime of a few engineers and Bell Laboratories scientists into a multimillion-dollar industry. In the space of only fifteen years, “hi-fi” became virtually a mass-market commodity and certainly a household term. In the late 1970s, the first primitive microprocessors (miniature computer-type logic-plus-memory devices) appeared in home audio equipment. These permitted the user to program what was known as an “FM tuner,” “record player,” or “tape recorder” to follow a certain procedure in delivering broadcast or recorded material.

For those who are not collectors of those antique audio devices, which employed “records” or “tapes,” such terms require explanation. From its earliest beginning, recording employed an analog technique. This means that whatever sound was to be preserved and subsequently reproduced was converted to an equivalent corresponding mechanical irregularity on a surface. When playback was desired, this irregularity was detected or “read” by a mechanical sensing device and directly (later, indirectly) reconverted into sound. It may be difficult to believe, but if, say, a middle-A tone (which corresponds to air vibrating at a rate of 440 times per second) was recorded, the signal would actually consist of a series of undulations or bumps which would be made to travel under a very fine-pointed stylus at a rate of 440 undulations per second. Looking back from a present-day perspective, it seems a wonder that this sort of crude mechanical technique worked at all, and a veritable miracle that it worked as well as it did.

The End of Analog

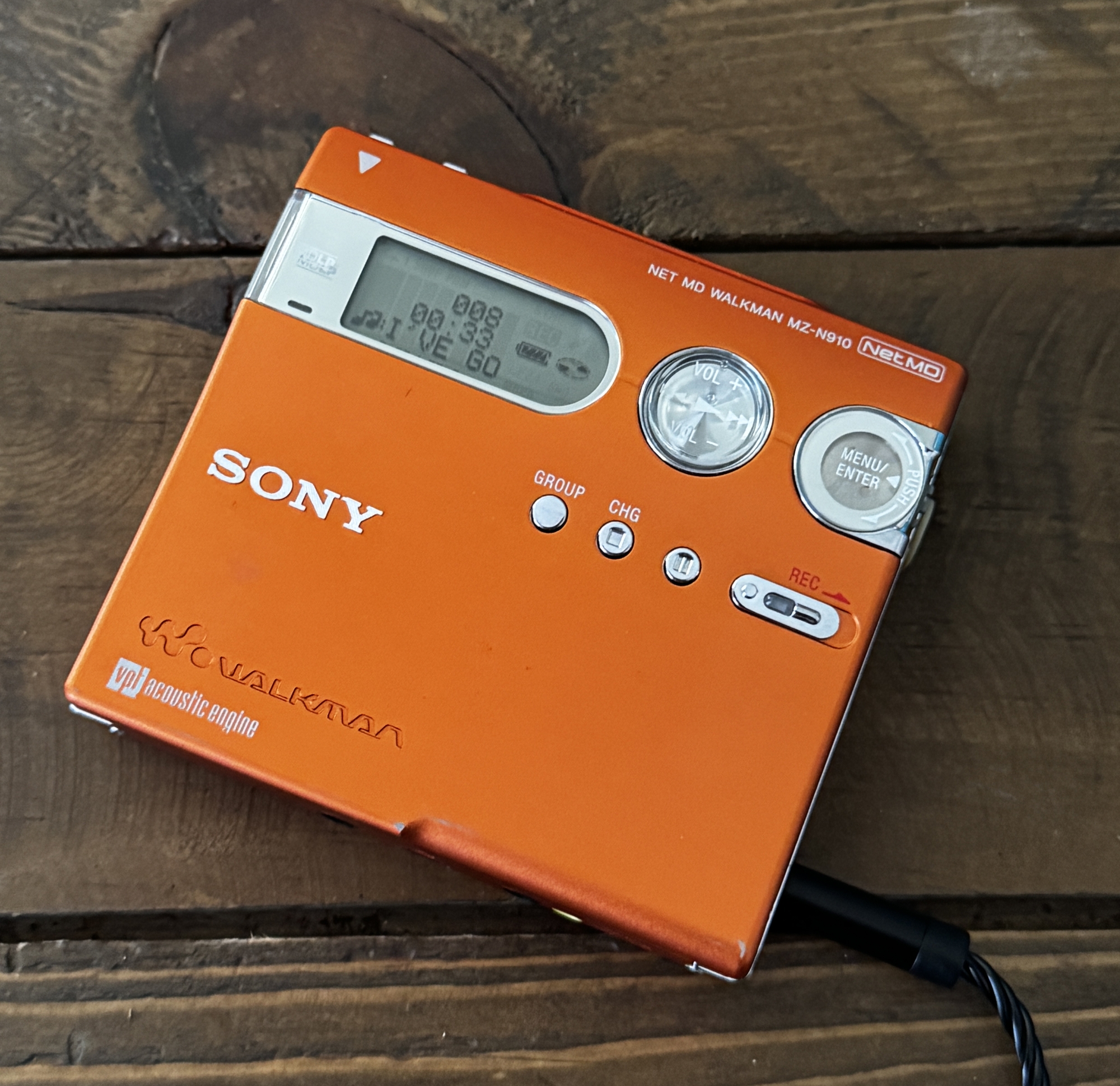

Magnetic recording first came into prominence in the 1950s. Instead of undulations on the walls of a groove molded in a nominally flat vinyl disc, there were a series of magnetic patterns laid down on very long lengths of thin plastic tape coated on one side with a readily magnetized material. However, the system was still analog in principle, since if the 440-Hz tone was magnetically recorded, 440 cycles of magnetic flux passed by the reproducing head in playback. All analog systems, no matter what the format, suffered the same inherent problem (susceptibility to noise and distortion), and the drive for further improvement caused the development of the digital audio recorder.

Simply explained, the digital recording technique “samples” the signal, say, 50,000 times a second, and for each instant of sampling it assigns a digitally encoded number that indicates the relative amplitude of the signal at that moment. Even the most complex signal can be assigned one number that will totally describe it for an instant in time if the “instant” chosen is brief enough. The more complex the signal, the greater the number of samples needed to represent it properly in encoded form.
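(An aside from me, not part of Klein's article: the sampling idea in the paragraph above can be sketched in a few lines of Python. This is only a rough illustration. The 50,000 samples per second and the 440 Hz middle-A tone come from the article; the 16-bit word length and the function name `sample_tone` are my own assumptions.)

```python
import math

SAMPLE_RATE = 50_000  # samples per second, the figure quoted in the article
TONE_HZ = 440         # middle A, the article's example tone
BITS = 16             # assumed word length; the article does not give one


def sample_tone(n_samples, freq=TONE_HZ, rate=SAMPLE_RATE, bits=BITS):
    """Return one integer code per sampling instant of a pure sine tone."""
    full_scale = 2 ** (bits - 1) - 1  # largest positive code, 32767 for 16 bits
    return [
        # amplitude at instant n / rate, scaled and rounded to a whole number
        round(full_scale * math.sin(2 * math.pi * freq * n / rate))
        for n in range(n_samples)
    ]


codes = sample_tone(SAMPLE_RATE)  # one second of the tone
print(len(codes), codes[:5])
```

Each entry in `codes` is the "digitally encoded number" for one sampling instant. One second of a single channel comes to 50,000 such numbers, which gives a feel for why storage capacity looms so large in the rest of the piece.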

In the late Seventies and early Eighties, digital audio tape recording proliferated on the professional level, and slightly later it also became standard for the home recordist. Many of the better home videotape recording systems were adaptable for audio recording; they either came with built-in video-to-audio switching or had accessory converters available.

The video disc, first announced in the late 1960s, progressed rapidly along its own independent path, since it benefited from many of the same technical developments as the other home video and digital products. By the mid-1980s a variety of video-disc players were available that, when fed the proper disc, could provide either large-screen video programs with stereo sound or multichannel audio with separate reverb-only channels. The flat semiconductor TV screen that was available in any size desired appeared in the early 1980s. It was the inevitable outgrowth of the light-emitting diode (LED) technology that provided the readouts for the electronic watches and calculators that were ubiquitous during the early 1970s. Later in the decade, giant-screen home video faced competition from holographic recording/playback techniques. Whether the viewer preferred a three-dimensional image that was necessarily limited in size and confined (somewhat) in spatial perspective or a life-size two-dimensional one ultimately came down to the specifics of the program material. In any case, the two non-compatible formats competed for the next twenty years or so.

LSI, RAM, and ROM

By the late 1980s, the pocket computer (not calculator) had become a reality. Here too, the evolutionary trend had been clearly visible for some time. The first integrated circuits were built in the late 1950s with only one active component per “chip.” By the end of the Seventies, some LSI (large-scale integrated circuit) chips had over 30,000 components, and RAM (random-access memory) and ROM (read-only memory) microprocessor chips became almost as common as resistors in the hi-fi gear of the early 1980s. ADC’s Accutrac turntable (ca. 1976) was the first product resulting from (in their phrase) “the marriage of a computer and an audio component.” The progeny of this miscegenation was the forerunner of a host of automatic audio components that could remember stations, search out selections, adjust controls, prevent audio mishaps, monitor performance, and in general make equipment operation easier while offering greater fidelity than ever before. As a critic of the period wrote, “This new generation of computerized audio equipment will take care of everything for the audiophile except the listening.” Shortly thereafter, the equipment did begin to “listen” also, and soon any audiophile without a totally voice-controlled system (keyed, of course, only to his own vocal patterns) felt very much behind the times. One could also verbally program the next selection, or the next one hundred.

“Resident” Computers

The turn of the century saw LSI chips with million-bit memories and perhaps 250 logic circuits, and the eruption of two controversies, one major and one minor. The major controversy would have been familiar to those of our ancestors who were involved in the cable-vs-broadcast TV hassles during the 1970s and later. The big question in the year 2000 was the advantage of “time sharing” compared with “resident” computers for program storage.

Since the 1950s the need for fast output and large memory-storage capacity had driven designers into ever more sophisticated devices, most of them derived from fundamental research in solid-state physics. The late 1970s, a period of rapid advances, saw the primitive beginnings of numerous different technologies, including the charge-transfer device (CTD), the surface acoustic-wave device (SAW), and the charge-coupled device (CCD), each of which had special attributes and ultimately was pressed into the service of sound reproduction processing and memory. The development of the technique of molecular-beam epitaxy (which enabled chips to be fabricated by bombarding them with molecular beams) eventually led to superconductor (rather than semiconductor) LSIs and molecular-tag memory (MTM) devices. Super-fast and with a fantastically large storage capacity, the MTM chips functioned as the heart of the pocket-size ROM cartridge (or “cart” as it was known) that contained the equivalent of hundreds of primitive LP discs.

The read-only memory of the MT carts could provide only the music that had been “hard-programmed” into them. This was fine for the classical music buff, since it was possible to buy the complete works of, say, Bach, Beethoven, and Carter in a variety of performances all in one MT cart and still have molecules left over for the complete works of Stravinsky, Copland, Smythe, and Kuzo. However, anyone concerned with keeping his music library up to date with the latest Rama-rock releases or Martian crystal-tone productions obviously needed a programmable memory. But how would the new program get to the resident computer and in what format?

By this time, every home naturally had a direct cable to a master time-sharing computer whose memory banks were constantly being updated with the latest compositions and performances. That was just one of its minor facilities, of course, but music listeners who subscribed to the service needed only request a desired selection and it would be fed and stored in their RAM memory units. Those audiophiles who derived no ego gratification from owning an enormous library of MT carts could simply use the main computer feed directly and avoid the redundance of storing program material at home. Everyone was wired anyway, directly, to the National Computer by ultra-wide-bandwidth cable. The cable normally handled multichannel audio-video transmissions in addition to personal communication, bill-paying, voting, etc., and, of course, the Transfax printout you are now reading.

Creative Options

The other controversy mentioned, a relatively minor one, involved a question of creative aesthetics. The equalizers used by the primitive analog audiophiles provided the ability to second-guess the recording engineers in respect to tonal balance in playback. This was child’s play compared with the options provided by computer manipulation of the digitally encoded material. Rhythms and tempos of recorded material could easily be recomposed (“decomposed” in the view of some purists) to the listeners’ tastes. Furthermore, one could ask the computer to compose original works or to pervert compositions already in its memory banks. For example, one could hear Mongo Santamaria’s rendition of Mozart’s Jupiter Symphony or even A Hard Day’s Night as orchestrated by Bach or Rimsky-Korsakov. The computer could deliver such works in full fidelity—sonic fidelity, that is—without a millisecond’s hesitation.

Since Edison’s time, the major problems of high fidelity have occurred in the interface devices, those transducers that “read” the analog-encoded material from the recording at one end of the chain or converted it into sound at the other. Digital recording, computer manipulation of the program material, and the MT memory carts solved the pickup end of the problem elegantly; however, for decades the electronic-to-sonic reconversion remained terribly inexact, despite the fact that it was known for at least a century that the core of the problem lay in the need to overlay a specific acoustic recording environment on a nonspecific listening environment. Techniques such as time-delay reverb devices, quadraphonics, and binaural recording/playback, which put enough “information” into a listening environment to override, more or less, the natural acoustics, were frequently quite successful in creating an illusion of sonic reality. But it continued to be very difficult to establish the necessary psychoacoustic cues. The problem was soluble, but it was certainly not easy with conventional technology. And the necessary unconventional technology appeared only in the early years of this century.

Brain Waves

It has long been known that all the material fed to the brain from the various sense organs is first translated into a sort of pulse-code modulation. But it was only fifty years ago that the psychophysiologists managed to break the so-called “neural code.” The first applications of the neural-code (NC) converters were, logically enough, as prosthetic devices for the blind and deaf. (The artificial sense organs themselves could actually have been built a hundred years ago, but the conversion of their output signals to an encoded form that the brain would accept and translate into sight and sound was a major stumbling block.)

The NC (encee) converter was fed by micro-miniature sensors and then coupled to the brain through whatever neural pathways were available. Since rather delicate surgery was required to implant and connect the sensory transducer/converter properly, the invention of the Slansky Neuron Coupler was hailed as a breakthrough rivaling the original invention of the neural code converter. The Slansky Coupler, which enabled encoded information to be radiated to the brain without direct connection, took the form (for prosthetic use) of a thin disk subcutaneously implanted at the apex of the skull. Micro-miniature sensors were also implanted in the general location of the patient’s eyes or ears. Total surgery time was less than one hour, and upon completion the recipient could hear or see at least as well as a person with normal senses.

What has all this to do with high-fidelity reproduction? Ten years ago a medical student “borrowed” a Slansky device and with the aid of an engineer friend connected it to a hi-fi system and then taped it to his forehead. Initially, the story goes, the music was “translated” (“scrambled” would be more accurate) into color and form and the video into sound, but several hundred engineering hours later the digitally encoded program and the Slansky device were properly coupled and a reasonable analog of the program was directly experienced.

When the commercial entertainment possibilities inherent in the Slansky Coupler became evident, it was only a matter of time before special program material became available for it. And at almost every live entertainment or sports event, hi-fi hobbyists could be seen wearing their sensory helmets and recording the material. When played back later, the sight and sound fed directly to the brain provided a perfect you-are-there experience, except that other sensory stimuli were lacking. That was taken care of in short order. Although the complete sensory recording package was far too expensive for even the advanced neural recordist, “underground” cartridges began to appear that provided a complete surrogate sensory experience. You were there—doing, feeling, tasting, hearing, seeing whatever the recordist underwent. The experience was not only subjectively indistinguishable from the real thing, but it was, usually, better than life. After all, could the average person-in-the-street ever know what it is to play a perfect Cyrano before an admiring audience or spend an evening on the town (or home in bed) with his favorite video star?

The potential for poetry, and for pornography, was unlimited. And therein, as we have learned, is the social danger of the Slansky device. Since the vicarious thrills provided by the neural-code-converter/coupler are certainly more “interesting” than real life ever is, more and more citizens are daily joining the ranks of the “encees.” They claim (if you can establish communication with them) that life under the helmet is far superior to that experienced by the hidebound “realies.” Perhaps they are right, but the insidious pleasures of the encee helmet have produced a hard core of dropouts from life far exceeding in both number and unreachability those generated by the drug cultures of the last century. And while the civil-liberties and moral aspects of the matter are being hotly debated, the situation is worsening daily. It is doubtful that the early audiophiles ever dreamed that the achievement of ultimate high-fidelity sound reproduction would one day threaten the very fabric of the society that made it possible.