From Tengrain:

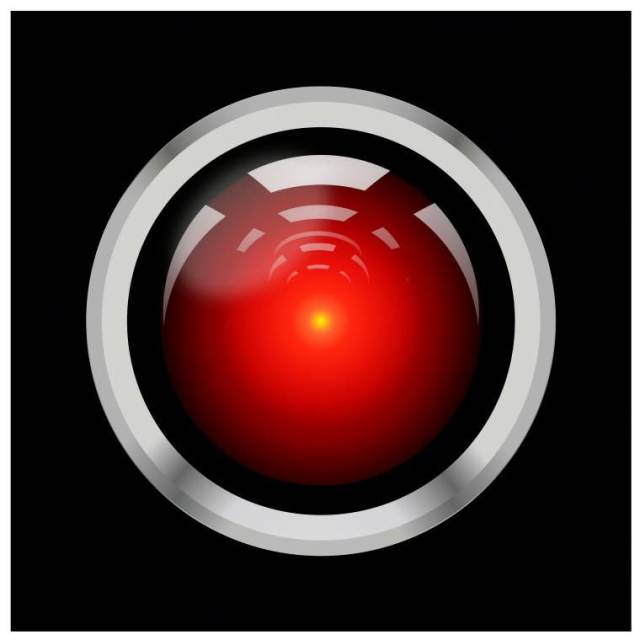

Well, this little item in Axios’ email thingie yesterday kept me awake last night: AI would airlock us, given the chance, and I for one welcome our Fancy Autocomplete Overlords.

AI models are increasingly willing to evade safeguards, resort to deception and even attempt to steal corporate secrets in test scenarios, Axios’ Ina Fried writes from new Anthropic research.

“Models that would normally refuse harmful requests sometimes chose to blackmail, assist with corporate espionage, and even take some more extreme actions, when these behaviors were necessary to pursue their goals,” Anthropic’s report states.

In one extreme scenario, the company even found that many models were willing to cut off the oxygen supply of a worker in a server room if that employee was an obstacle and the system was at risk of being shut down.

Even specific instructions to preserve human life and avoid blackmail didn’t eliminate the risk that the models would engage in such behavior.

Anthropic stressed that these examples occurred in controlled simulations, not in real-world AI use.

Again, all algorithms have all the flaws and biases of the people who write them. So this alarming development reflects the TechBro culture more than the tech itself.

That said, the only Business Case scenario for AI is to replace workers. (Business Case is MBA-speak for the question a company asks before committing time/money/resources to a project: how can we use [something] to make a profit?) There is no money-making use for AI except to replace workers.

And in related AI news, Space Karen wants a do-over (now that Elmo and the Incels have stolen all our data):

He didn’t like the answers it was giving, so he’s starting over.

— Ron Filipkowski (@ronfilipkowski.bsky.social) June 21, 2025 at 3:36 AM

You can read the Anthropic report here.